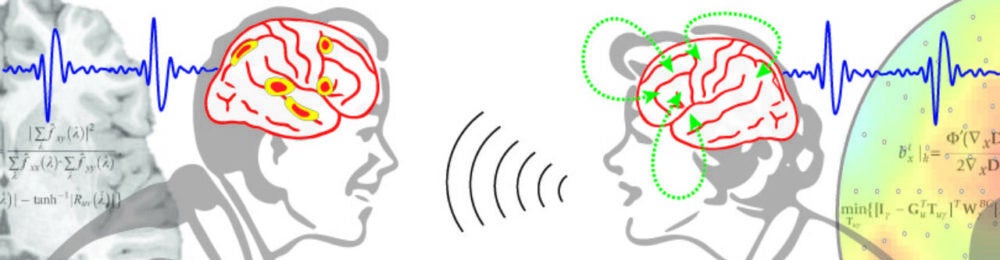

We use behavior, biosignals (EEG, EMG), and machine learning to i) understand the basic brain mechanisms that support comprehension, speech production, and manual communicative gestures, ii) diagnose how different individuals fail to comprehend or communicate, and iii) treat impairments with assistive devices that cope with dynamic, realistic situations across different ability levels and across the life span. As a result, our work is highly collaborative with a strong clinical-translational orientation.

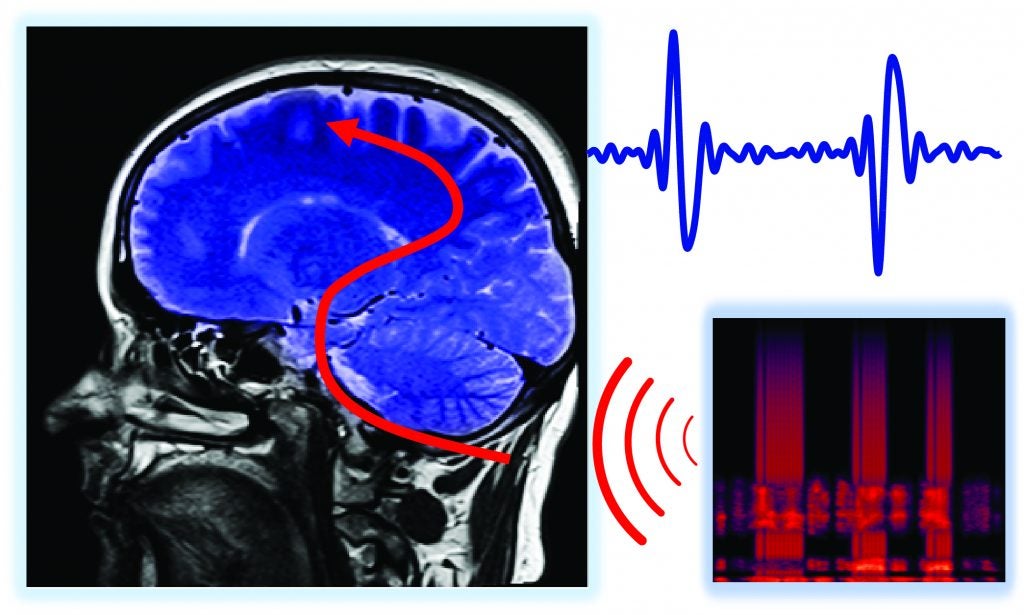

A Brain-based Hearing Loss Diagnostic

We have developed a patented approach to rapidly diagnose the neural hallmarks of hearing loss, from peripheral impairment to central, cognitive factors. The approach combines natural speech acoustics with acoustic chirps to produce “Cheech” (chirp-speech), which can be used in any real-world perceptual, linguistic, or cognitive test. We are presently applying this engineered speech in older adults with hearing loss and, in collaboration with Dr. David Corina, using it to characterize auditory/speech development and auditory-visual plasticity in children with cochlear implants (see below).

Cochlear Implants and Neural Plasticity

In this project, we collaborate closely with my Center for Mind & Brain colleague Prof. David Corina. We are investigating inter-individual differences in auditory-visual plasticity in relation to children’s pre-implant language experience and eventual language outcomes. Among other insights, our work challenges the long-held idea that early auditory deprivation leads to an auditory cortex that is disordered and remapped by visual influences. Rather, we show that cortical activity following implantation reflects alterations to visual-sensory attention and saliency mechanisms. This project has immediate relevance for treating deafness early in life, particularly with regard to the modality of language exposure (oral vs. sign).

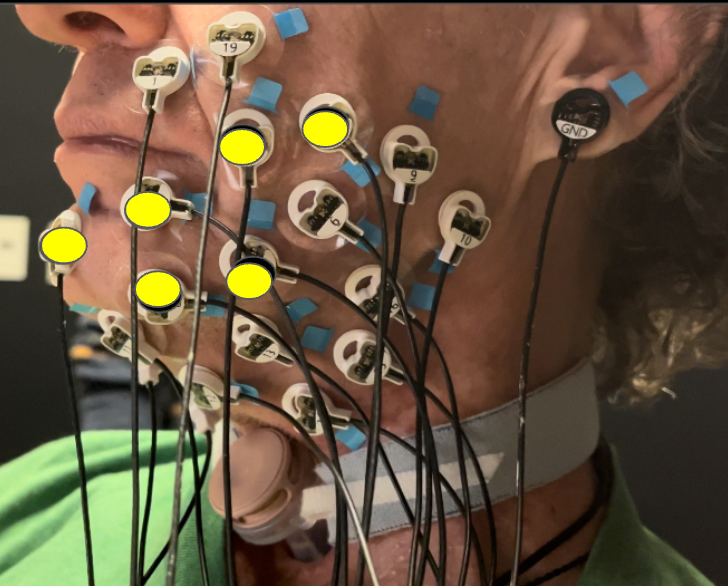

“Silent Speech”: Restoring speech production

As many as 100 million individuals worldwide have lost the ability to produce natural, comprehensible speech (from head/neck cancer, stroke, Parkinson’s disease, etc.). Presently, there is no way to restore natural speech ability in these individuals. We are developing an assistive device that enables fluent, own-voice speech production using “silent speech”: the device combines surface electromyography (sEMG) recordings of muscle activity with video of the face, decodes those signals with machine learning, and synthesizes intelligible speech output.

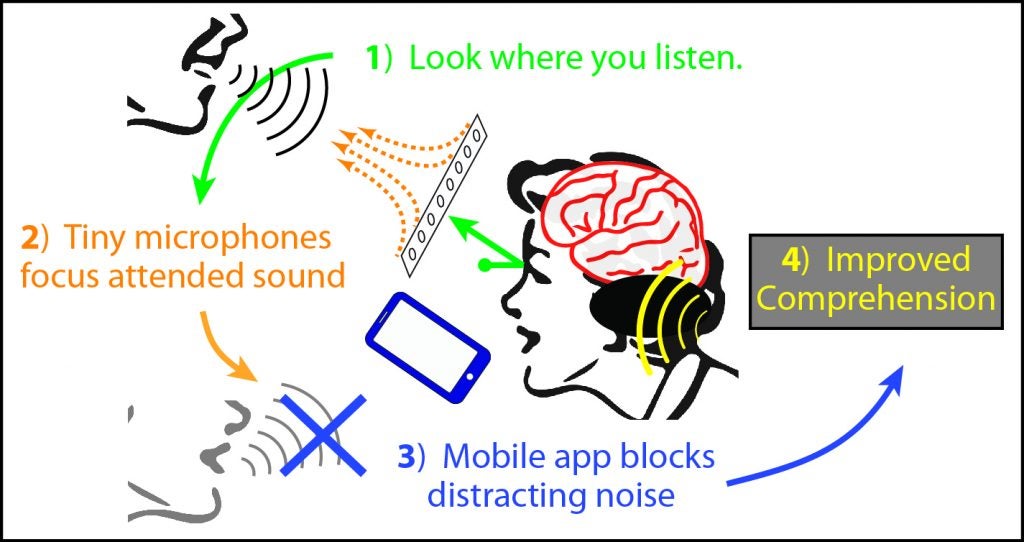

A Super-Hearing Aid

This project aims to treat impaired comprehension with an assistive device that uses eye gaze tracking and microphone array beamforming to serve as an “attentional prosthesis”: wherever a listener looks, she will hear that sound best. The system has been implemented on a mobile platform (Android) and incorporates virtual 3-D acoustic cues to improve real-world comprehension both in individuals with hearing loss and in healthy listeners.
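To make the idea of gaze-steered beamforming concrete, here is a minimal Python sketch of a delay-and-sum beamformer, one of the simplest beamforming techniques. It is an illustration under stated assumptions, not the project's actual implementation: it assumes a uniform linear microphone array, a far-field source, and a gaze direction already converted to an azimuth angle. The function names, array geometry, and test tone are all hypothetical.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at room temperature


def steering_delays(mic_x, azimuth_deg):
    """Delays (s) that time-align a far-field source at azimuth_deg.

    mic_x: microphone positions along a line, in meters (hypothetical layout).
    Azimuth is measured from straight ahead, positive toward +x.
    """
    lead = mic_x * np.sin(np.deg2rad(azimuth_deg)) / SPEED_OF_SOUND
    # Microphones nearer the source hear it earlier, so they get more delay.
    return lead - lead.min()


def delay_and_sum(signals, fs, delays):
    """Delay each channel (rows of `signals`) and average them.

    Delays are applied as phase shifts in the frequency domain, which
    allows fractional-sample delays but treats each block as circular.
    """
    n = signals.shape[1]
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    spectra = np.fft.rfft(signals, axis=1)
    phase = np.exp(-2j * np.pi * freqs[None, :] * delays[:, None])
    aligned = np.fft.irfft(spectra * phase, n=n, axis=1)
    return aligned.mean(axis=0)


# Simulated example: a 1 kHz tone arriving from 30 degrees at a 4-mic array.
mic_x = np.arange(4) * 0.05            # 5 cm spacing (assumed)
fs = 16000
t = np.arange(1600) / fs
arrival = -mic_x * np.sin(np.deg2rad(30.0)) / SPEED_OF_SOUND
signals = np.sin(2 * np.pi * 1000 * (t[None, :] - arrival[:, None]))

# "Looking" at the source versus looking 30 degrees the other way.
on_target = delay_and_sum(signals, fs, steering_delays(mic_x, 30.0))
off_target = delay_and_sum(signals, fs, steering_delays(mic_x, -30.0))
```

In this sketch, steering toward the source adds the channels coherently, while steering away leaves them partly out of phase, so the tone is attenuated. A wearable system would need block-wise processing and a measured array geometry, which this example does not attempt.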

Fairness and accessibility in neural-biosignal interfaces

Neuromotor interfaces such as sEMG “smart watch” sensors promise to be the most significant update to human-computer interaction since the invention of the mouse. However, these interfaces do not work well for many users who differ in age, body mass, skin condition, and other characteristics. With the support of Meta’s Ethical Neurotechnology program, we are developing neuromotor interfaces that are fair and unbiased across different population groups (e.g., the very old, or those with high BMI). Our efforts were cited in Meta’s press release, “A Look at Our Surface EMG Research Focused on Equity and Accessibility”.

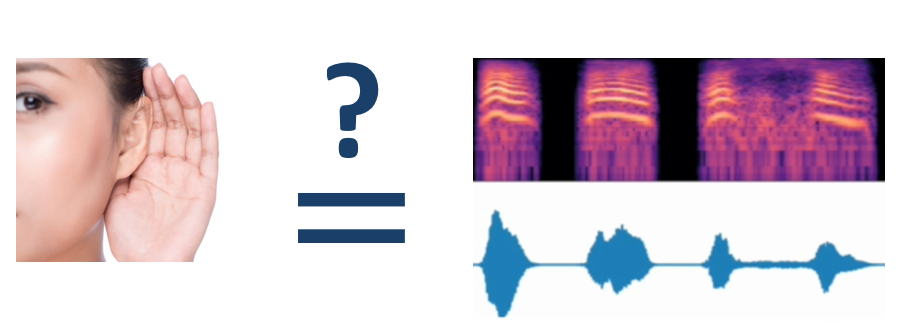

“AI Listener”

This project aims to build a human-validated, automatic “AI Listener” to evaluate speech on numerous perceptual and linguistic levels. The tool presently has two application areas: digital humanities (in collaboration with Marit MacArthur) and clinical speech restoration. In the clinical domain, we are refining our “AI Listener” to improve synthesized speech output for those with neuromotor disease and injury. This applies to non-invasive neuromotor decoding, as in our “silent speech” project above, and to leading-edge invasive speech brain-computer interfaces (speech BCIs), in collaboration with Sergey Stavisky and Dr. David Brandman.

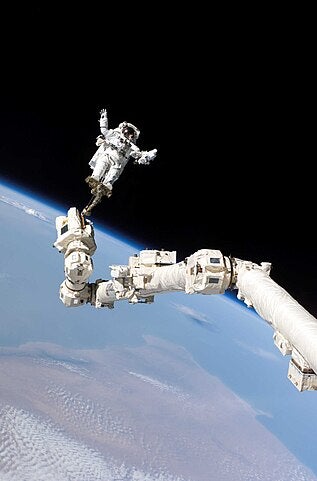

Sensory-motor learning, coordination, and cognition

In this area of research, I collaborate with many colleagues including Sanjay Joshi, Jonathan Schofield, Wil Joiner, Steve Robinson, and Rich Whittle. These projects address numerous unsolved challenges in human-machine teaming and co-adaptation, autonomy and trust, bimanual coordination, and learning to use additional motor effectors (such as supernumerary limbs). Applications range from everyday human-machine interactions, to prosthetics, to working in challenging or adverse environments such as space flight.